
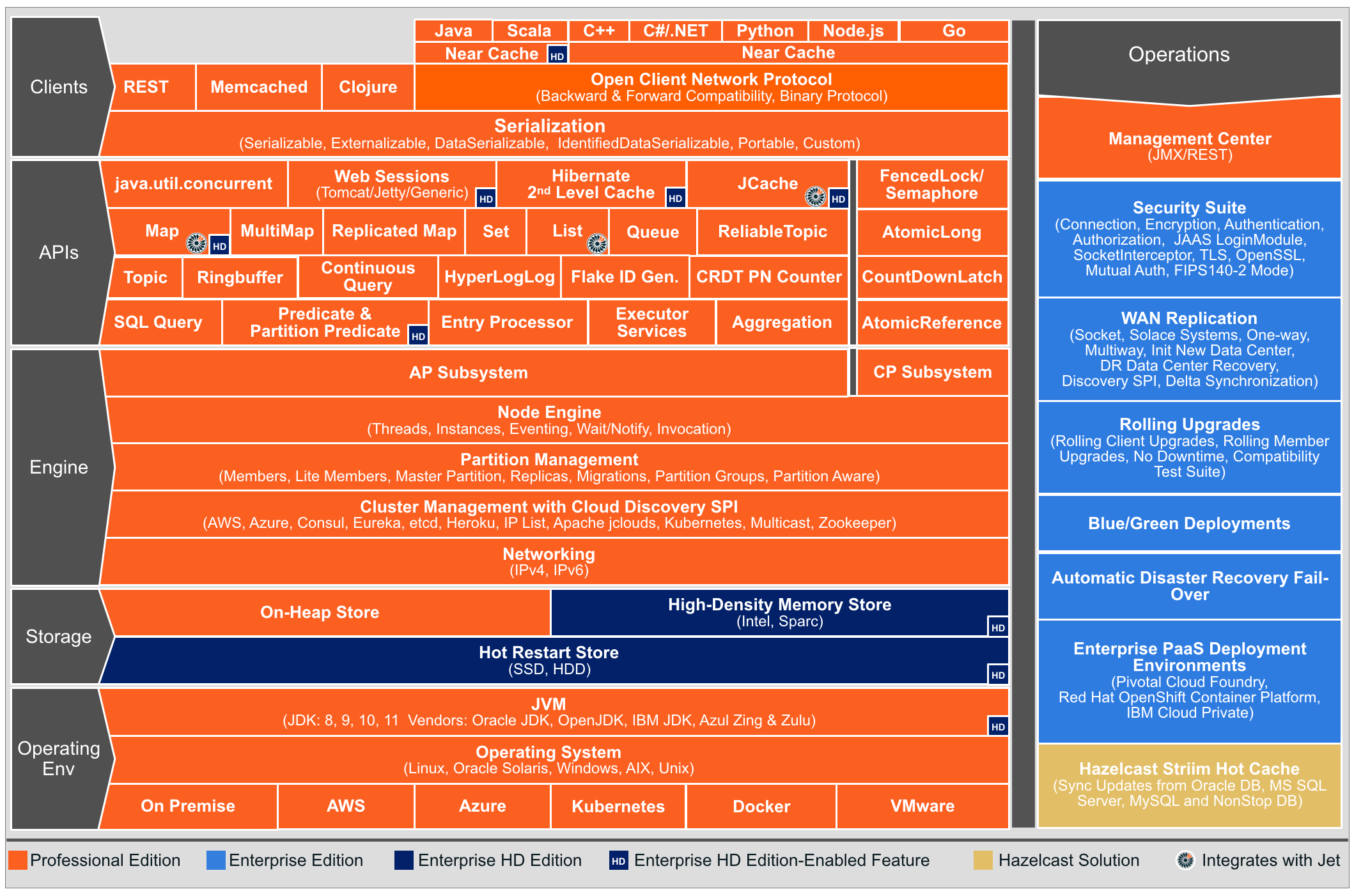

DLT and Hazelcast have partnered to deliver high-performance software systems that address the large-scale data processing requirements at your agency. We help with a wide variety of use cases that operate on large volumes of time-sensitive data, including programmatic extract-transform-load (ETL). Our flexible data platform simplifies the deployment of modern architectural patterns such as machine learning inference and artificial intelligence.

Hazelcast is the fast cloud application platform trusted by Global 2000 enterprises and government agencies to deliver ultra-low latency, stateful, and data-intensive applications. Featuring real-time streaming and memory-first database technologies, the Hazelcast platform simplifies the development and deployment of distributed applications on-premises, at the edge, or in the cloud as a fully managed service. Hazelcast is headquartered in San Mateo, CA, with offices across the globe.

The Hazelcast In-Memory Computing Platform ("Hazelcast Platform") is an easy-to-deploy, API-based software package that accelerates large-scale, data-intensive infrastructures in the cloud, at the edge, or on-premises. It is available as open-source software, as licensed software with advanced features, and as a fully managed cloud service on all the major cloud providers. Hazelcast is used to augment any system that requires higher-throughput and/or lower-latency data processing.

The platform consists of two components ("Hazelcast IMDG" and "Hazelcast Jet"), which enable high performance via three main capabilities:

Hazelcast offers an in-memory data grid (IMDG) as part of its platform ("Hazelcast IMDG"), which can be used as a high-speed data store accessed through SQL or the data object-based API. It coordinates access to data objects across a cluster of networked computers, pooling the RAM from those computers into one large virtual block of RAM. It has many built-in optimization strategies that reduce network accesses when retrieving data, which keeps data access fast.

Distributed stream processing. Hazelcast offers a distributed stream processing engine ("Hazelcast Jet") that is designed for high-speed processing of large volumes of data. It coordinates the processing effort across multiple networked machines as a cluster by assigning subtasks to each of the computers. It is typically used for taking action on an unbounded, incoming feed of data (i.e., a "data stream"). It is packaged as a software library with an application programming interface (API) that lets software engineers transform, enrich, aggregate, filter, and analyze data at high speeds.

Distributed batch processing. Hazelcast provides distributed batch processing capabilities through the API mentioned above, breaking large-scale workloads into smaller tasks that run in parallel to take advantage of all the hardware resources in the cluster. Both Hazelcast Jet and Hazelcast IMDG have provisions to deliver software code to each node in the cluster, which simplifies the development of distributed applications. Hazelcast uses data-locality strategies so that each task operates on data held on the same node, minimizing data movement across the network and significantly reducing latency.

Hazelcast provides system acceleration to a wide variety of solutions, running on-premises, at the edge, or in the cloud (including multi-cloud and hybrid cloud deployments).

To accelerate access to commonly used data, Hazelcast enables a large common data repository with in-memory speeds. Instead of building a separate cache per application, a centrally managed system can provide an API-based caching service. Hazelcast pools RAM from multiple hardware servers in a cluster, enabling a large in-memory data cache that can be leveraged by multiple applications and teams. Data can be shared as needed, or isolated and secured by using built-in role-based access controls.

Common operational picture. Static reference data and dynamically changing mission data can be combined and stored in-memory across a cluster of hardware servers to provide a high-speed analytics repository. Continuous querying can enable real-time decision support. Built-in capabilities for continuity and security ensure the system is reliable and protected.

Database acceleration. Data-intensive applications are often limited in performance by I/O bottlenecks. Since many applications have repetitive data access patterns, using Hazelcast as a data layer between applications and your disk-based databases can dramatically improve access times.
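
To make the database-acceleration idea concrete, here is a minimal sketch of an in-memory data layer placed in front of a slow database. It is an illustration only and does not use the Hazelcast API: the class names `SlowDatabase` and `CacheAsideStore` are hypothetical, the plain Python `dict` stands in for a distributed in-memory map, and the 10 ms delay is an assumed figure for disk I/O.

```python
import time


class SlowDatabase:
    """Hypothetical stand-in for a disk-based database with slow reads."""

    def __init__(self, rows, delay_s=0.01):
        self._rows = dict(rows)
        self._delay_s = delay_s  # assumed I/O cost per read
        self.reads = 0           # reads that actually reached the database

    def get(self, key):
        self.reads += 1
        time.sleep(self._delay_s)
        return self._rows.get(key)


class CacheAsideStore:
    """Serves reads from memory and falls back to the database on a miss."""

    def __init__(self, database, cache=None):
        self._db = database
        # A plain dict here; a real deployment would use a distributed map.
        self._cache = {} if cache is None else cache

    def get(self, key):
        if key in self._cache:
            return self._cache[key]   # cache hit: no database I/O
        value = self._db.get(key)     # cache miss: pay the I/O cost once
        if value is not None:
            self._cache[key] = value
        return value


db = SlowDatabase({"cust-1": "Ada", "cust-2": "Grace"})
store = CacheAsideStore(db)

# A repetitive access pattern: the same two keys are read many times.
for _ in range(50):
    store.get("cust-1")
    store.get("cust-2")

print(db.reads)  # 2: only the first read of each key reached the database
```

Of the 100 reads in this sketch, 98 are served from memory, which is why repetitive access patterns benefit most from a data layer of this kind. The sketch leaves out invalidation and expiry, which a production cache would need in order to stay consistent with the database.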
